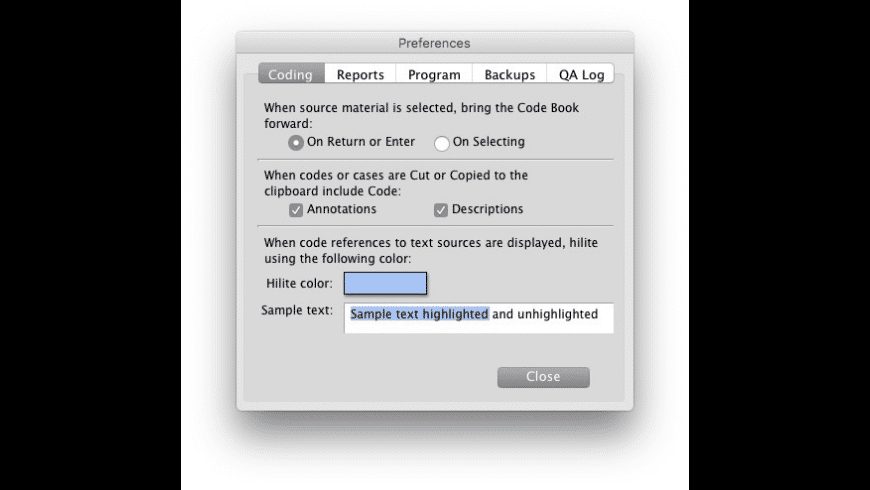

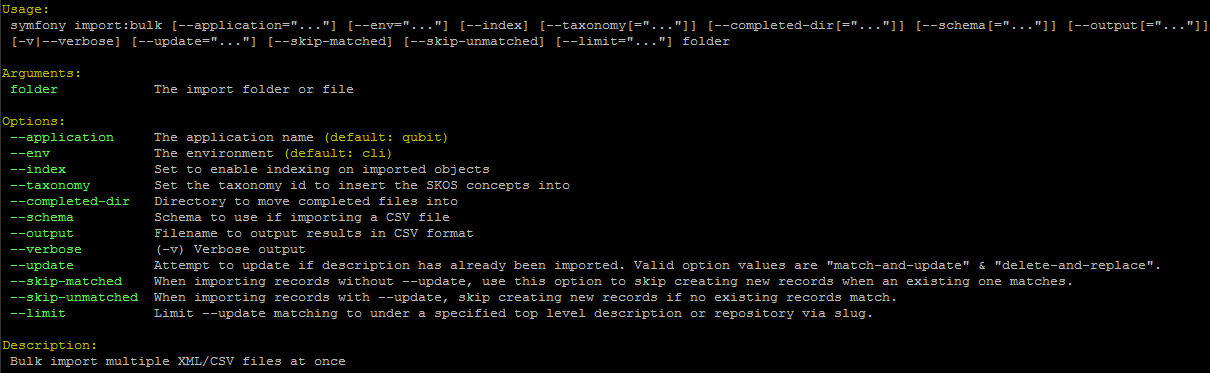

The config.py file is where you set up the search. Concretely, you can edit the hyperparameters of HyperBand, the default learning rate, the dataset of choice, etc.

Two parameters control the HyperBand algorithm. max_iter is the maximum number of iterations allocated to a given hyperparameter configuration. eta is the elimination rate of successive halving: each round keeps roughly 1/eta of the surviving configurations and discards the rest. A third setting, epoch_scale, is a boolean indicating whether max_iter is counted in mini-batch iterations or in epochs. This is useful if you want to speed up HyperBand and don't want to evaluate a full pass over a large dataset.

Set max_iter to the training budget you would normally give a single neural network. It's mostly a rule of thumb, but aim for something in the range of […] epochs. Larger values of eta correspond to a more aggressive elimination schedule and thus fewer rounds of elimination, so increase eta to get results faster at the cost of potentially sub-optimal performance.

Depending on the last layer in your model, you may also wish to edit the train_one_epoch() method in the hyperband.py file. The default uses F.nll_loss because it assumes the model ends with a LogSoftmax layer, but feel free to change the loss to suit your needs.
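To see how max_iter and eta shape the search, here is a minimal sketch of the bracket schedule from the original HyperBand paper (Li et al.). It is not taken from hyperband.py; the function name hyperband_schedule is hypothetical, and the resource unit (epochs or mini-batch iterations, depending on epoch_scale) is left abstract.

```python
def hyperband_schedule(max_iter, eta=3):
    """Return, per bracket, a list of (n_configs, resource_per_config) rounds."""
    # s_max = floor(log_eta(max_iter)), computed with integers to avoid float error
    s_max = 0
    while eta ** (s_max + 1) <= max_iter:
        s_max += 1
    brackets = []
    for s in range(s_max, -1, -1):
        # initial number of configs: ceil((s_max + 1) * eta^s / (s + 1)), integer ceil division
        n = ((s_max + 1) * eta ** s + s) // (s + 1)
        # initial resource per config: max_iter * eta^(-s)
        r = max_iter / eta ** s
        rounds = []
        for i in range(s + 1):
            # successive halving: keep floor(n / eta^i) configs, each trained eta^i times longer
            rounds.append((n // eta ** i, r * eta ** i))
        brackets.append(rounds)
    return brackets


for bracket in hyperband_schedule(81, eta=3):
    print(bracket)
# first line printed: [(81, 1.0), (27, 3.0), (9, 9.0), (3, 27.0), (1, 81.0)]

print(len(hyperband_schedule(81, eta=3)), len(hyperband_schedule(81, eta=9)))  # 5 3
```

With max_iter = 81, raising eta from 3 to 9 cuts the number of brackets from five to three and shortens each bracket, which is the "more aggressive elimination schedule and thus fewer rounds of elimination" trade-off described above.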
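Why F.nll_loss assumes a LogSoftmax output can be shown with a small pure-Python sketch of the two operations for a single example. These helpers only mirror what PyTorch's log_softmax and nll_loss compute (no batching or reduction options); they are illustrative and not the torch implementations.

```python
import math


def log_softmax(logits):
    # subtract the max before exponentiating for numerical stability
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]


def nll_loss(log_probs, target):
    # negative log-likelihood only picks out -log p(target); it does no normalisation itself
    return -log_probs[target]


logits = [2.0, 0.5, -1.0]
target = 0
print(nll_loss(log_softmax(logits), target))  # correct loss: model ends in LogSoftmax
print(nll_loss(logits, target))               # -2.0: meaningless if the model emits raw logits
```

If your model outputs raw logits instead, the usual change in train_one_epoch() is to swap F.nll_loss for F.cross_entropy, which in PyTorch applies log_softmax and nll_loss in one call.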

Edit the config.py file to suit your needs. Keys combine a layer number and a hyperparameter name, as in 2_hidden or all_dropout, where the layer number can be a layer from your nn.Sequential model or all to signify all layers. Values name the distribution to sample from, which can be one of uniform, quniform, choice, etc., together with its parameters.

For example, the 2_hidden entry means to sample the hidden size of layer 2 of the model (Linear(in_features=512, out_features=256)) from a quantized uniform distribution with lower bound 512, upper bound 1000 and q = 1.

The all_dropout entry means to choose whether or not to apply dropout to all layers. choice means pick one element from a list: one element means False (no dropout), while the other, implicitly meaning True, samples the Dropout probability from a uniform distribution with lower bound 0.1 and upper bound 0.5.
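The sampling rules above can be sketched in a few lines of standard-library Python. The tuple layout in PARAMS and the helper names parse_key, sample and sample_config are all hypothetical, since the exact value syntax of the config is not shown here; this is a sketch of the described distributions, not the project's own sampler.

```python
import random

# Hypothetical search-space layout (an assumption, not the project's config format)
PARAMS = {
    "2_hidden": ("quniform", 512, 1000, 1),
    "all_dropout": ("choice", [False, ("uniform", 0.1, 0.5)]),
}


def parse_key(key):
    """Split '2_hidden' into ('2', 'hidden') and 'all_dropout' into ('all', 'dropout')."""
    layer, name = key.split("_", 1)
    return layer, name


def sample(spec, rng):
    """Draw one value from a (distribution, *args) tuple."""
    kind = spec[0]
    if kind == "uniform":
        _, low, high = spec
        return rng.uniform(low, high)
    if kind == "quniform":
        _, low, high, q = spec
        # quantized uniform: round a uniform draw to the nearest multiple of q
        return round(rng.uniform(low, high) / q) * q
    if kind == "choice":
        _, options = spec
        picked = rng.choice(options)
        # nested tuples are distributions themselves, so sample them recursively
        return sample(picked, rng) if isinstance(picked, tuple) else picked
    raise ValueError(f"unknown distribution: {kind!r}")


def sample_config(params, seed=None):
    """Sample one configuration, keyed by (layer, hyperparameter)."""
    rng = random.Random(seed)
    return {parse_key(key): sample(spec, rng) for key, spec in params.items()}


print(sample_config(PARAMS, seed=0))
```

Each HyperBand bracket would call something like sample_config once per configuration it starts with; the recursive handling of choice is what lets the "no dropout" option and a sampled dropout probability live in the same entry.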
