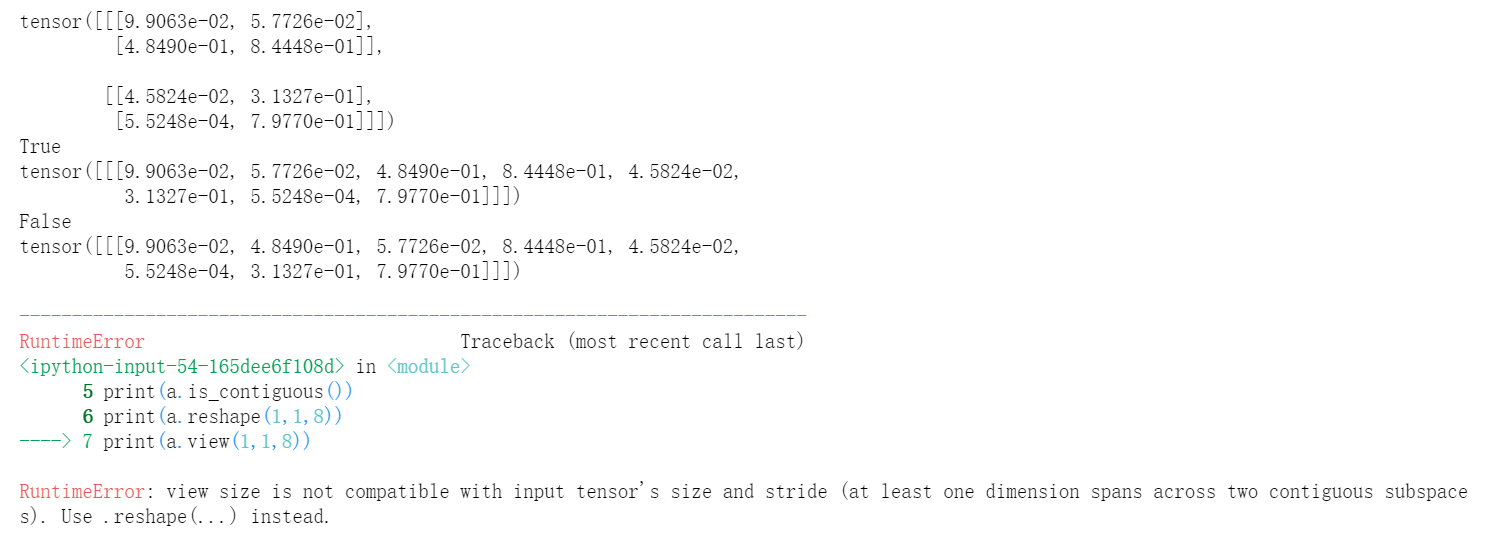

A `torch.Tensor` is a multi-dimensional matrix containing elements of a single data type. Torch defines 10 tensor types with CPU and GPU variants. One of them, 16-bit floating point (`torch.float16`, sometimes referred to as binary16), uses 1 sign, 5 exponent, and 10 significand bits. It is useful when precision is important at the expense of range.

This post looks at five ways to change the shape or the axis order of a tensor: `reshape`, `view`, `permute`, `transpose`, and `T`. All examples assume `import torch`. `torch.reshape()` behaves much like NumPy's `reshape`.

`Tensor.view()` reshapes a tensor to a different but compatible shape: the number of elements must stay the same, and the tensor's memory layout must allow the new shape. For example, our input tensor `aten` has the shape (2, 3), so it can be viewed as a tensor of shape (6, 1), (1, 6), and so on. Reshaping (or viewing) the 2x3 matrix as a column vector gives shape (6, 1). Alternatively, it can be reshaped or viewed as a row vector of shape (1, 6). Instead of spelling out every size, you can pass -1 for one dimension and let PyTorch infer it from the others, as in `aten.view(-1, 6)`, which again produces the (1, 6) row vector.

PyTorch uses `transpose` for transpositions and `permute` for permutations. `transpose()` swaps two dimensions, while `permute()` rearranges the dimensions in the order given by its arguments. Unlike `view()`, `permute()` does not regroup the elements into a new shape; it only swaps the axes. For `aten`, `aten.permute(1, 0)` swaps the two axes, so the shape changes from (2, 3) to (3, 2).

Some data come in HWC (height, width, channel) format, but PyTorch expects CHW (channel, height, width). `permute()` handles this conversion easily: `permute(2, 0, 1)` moves the channel axis to the front. It is also handy for lining up dimensions before a matrix multiplication. For example, if `a` has shape (1, 5, 2, 2), then `a.permute(0, 2, 3, 1)` has shape `torch.Size([1, 2, 2, 5])`, which fits the shape of `b` for matrix multiplication, since the last dimension of `a` now equals the first dimension of `b`.
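To make the float16 bit layout described above concrete, here is a minimal sketch that splits a half-precision value into its sign, exponent, and significand fields. It assumes a PyTorch version that supports `Tensor.view(dtype)` for reinterpreting raw bits; the helper name `float16_fields` is hypothetical, chosen only for this example.

```python
import torch

def float16_fields(x: float) -> tuple[int, int, int]:
    """Hypothetical helper: return the (sign, exponent, significand) bits of x stored as float16."""
    # Reinterpret the 16 bits of a float16 tensor as a signed 16-bit integer.
    raw = torch.tensor([x], dtype=torch.float16).view(torch.int16).item()
    bits = raw & 0xFFFF              # treat the bit pattern as unsigned
    sign = bits >> 15                # 1 sign bit
    exponent = (bits >> 10) & 0x1F   # 5 exponent bits (bias 15)
    significand = bits & 0x3FF       # 10 significand bits
    return sign, exponent, significand

print(float16_fields(1.0))    # (0, 15, 0)
print(float16_fields(-2.5))   # (1, 16, 256)

# The narrow 5-bit exponent is what limits the range.
info = torch.finfo(torch.float16)
print(info.bits, info.max, info.eps)  # 16 65504.0 0.0009765625
print(torch.isinf(torch.tensor(70000.0).half()).item())  # True: overflows float16
```

With only 5 exponent bits, anything above 65504 overflows to infinity, which is the "range" being traded away.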
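The `view()`/`reshape()` examples on `aten` can be sketched as follows. Only the (2, 3) shape comes from the text; the values are made up. The last part illustrates the "compatible" requirement: `view()` refuses a non-contiguous tensor such as a transpose, while `reshape()` falls back to copying.

```python
import torch

# Stand-in for `aten`: a 2x3 tensor (the values are illustrative).
aten = torch.tensor([[1, 2, 3],
                     [4, 5, 6]])

col = aten.view(6, 1)    # column vector, shape (6, 1)
row = aten.view(-1, 6)   # -1 is inferred: 6 elements / 6 columns = 1 row
print(col.shape, row.shape)  # torch.Size([6, 1]) torch.Size([1, 6])

# A transpose is non-contiguous, so view() cannot flatten it...
t = aten.t()             # shape (3, 2)
try:
    t.view(6)
    view_ok = True
except RuntimeError:
    view_ok = False
print(view_ok)           # False

# ...but reshape() returns a copy when a view is impossible.
print(t.reshape(6).tolist())  # [1, 4, 2, 5, 3, 6]
```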
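A short sketch contrasting the ways of reordering axes discussed above, using a 2x3 `aten` with illustrative values. For a 2-D tensor, `permute(1, 0)`, `transpose(0, 1)`, and `.T` give the same (3, 2) result; on a 3-D tensor the difference shows, since `transpose()` swaps exactly two dimensions while `permute()` takes the full new order.

```python
import torch

aten = torch.arange(6).reshape(2, 3)   # [[0, 1, 2], [3, 4, 5]]

p = aten.permute(1, 0)     # axes reordered: shape (2, 3) => (3, 2)
tr = aten.transpose(0, 1)  # swap dims 0 and 1
t_attr = aten.T            # reverses the dims; for 2-D this is a plain transpose
print(p.shape)             # torch.Size([3, 2])
print(torch.equal(p, tr) and torch.equal(tr, t_attr))  # True

# On a 3-D tensor, transpose() swaps two dims; permute() sets all of them.
x = torch.zeros(2, 3, 4)
print(x.transpose(0, 2).shape)    # torch.Size([4, 3, 2])
print(x.permute(1, 2, 0).shape)   # torch.Size([3, 4, 2])

# These return non-contiguous views, so call .contiguous() before .view().
print(p.is_contiguous())                 # False
print(p.contiguous().view(-1).tolist())  # [0, 3, 1, 4, 2, 5]
```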
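The two `permute()` use cases from the text (HWC to CHW, and lining up `a` with `b` for a matrix multiplication) can be sketched with random data. The image size (4x5 with 3 channels) is arbitrary, and `b`'s second dimension of 7 is a hypothetical choice; the text only requires that `b`'s first dimension match the last dimension of the permuted `a`.

```python
import torch

# HWC image (height=4, width=5, channels=3); the sizes are arbitrary.
img_hwc = torch.rand(4, 5, 3)
# New dim i takes old dim perm[i]: (2, 0, 1) -> (C, H, W).
img_chw = img_hwc.permute(2, 0, 1)
print(img_chw.shape)  # torch.Size([3, 4, 5])

# Going back to HWC uses the inverse permutation.
assert torch.equal(img_chw.permute(1, 2, 0), img_hwc)

# Aligning dims for matmul: move the size-5 axis of `a` to the end.
a = torch.rand(1, 5, 2, 2)
b = torch.rand(5, 7)             # hypothetical second dimension
a_perm = a.permute(0, 2, 3, 1)   # shape (1, 2, 2, 5)
out = a_perm @ b                 # last dim of a_perm (5) == first dim of b (5)
print(a_perm.shape, out.shape)   # torch.Size([1, 2, 2, 5]) torch.Size([1, 2, 2, 7])
```

`@` broadcasts the 2-D `b` across the leading (1, 2, 2) batch dimensions of `a_perm`.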
